How Google Search Works


Getting to know the basics of search engines is an obvious starting place to understand Search Engine Optimization (SEO). Most people use Google, so we’ll focus on how Google search works.

Before you search (and as a continuous process[1]), Google scours the internet and keeps a record of the pages it finds. This occurs via two processes called crawling and indexing. The result is a large database of downloaded and analyzed website content. When a search is made, Google interprets the intent of the query. Then it retrieves websites from that database and orders the results based on many ranking factors[2]. For a comprehensive understanding of these factors, explore our in-depth guide, Decoding Google’s 200+ Ranking Factors.

Here, we’ll go into greater detail on how crawling, indexing, query interpretation, and ranking work.


Before Search - Web Crawling and Indexing

Web crawlers (aka bots or spiders) are programs that download and store information from websites[3]. Googlebot starts with a list of known URLs and then follows some of the links it discovers on those pages. It also runs any JavaScript on the page (meaning it executes the code that renders dynamic content) and makes a copy of the pages it finds[1] [4]. Ultimately, page components like images, videos, and text are downloaded and stored[4]. This information is kept in a massive database called an index[4]. The size of Google’s index is approximately 100,000,000 GB[1]. Learn more about the crawling process in our detailed article, "How Google Crawls Websites (And How to Make Sure Your Site Gets Found)".
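To make the crawl loop concrete, here is a minimal Python sketch of link-following crawling. It is a toy under stated assumptions, not Googlebot: the `PAGES` dictionary is a hypothetical in-memory stand-in for the web (a real crawler fetches pages over HTTP, renders JavaScript, and honors robots.txt), links are pulled out with a naive regular expression, and the hypothetical `budget` parameter caps how many pages are stored.

```python
import re
from collections import deque

# Hypothetical in-memory "web": URL -> HTML. A real crawler would fetch
# these over HTTP; this only models discovering pages by following links.
PAGES = {
    "https://example.com/": '<a href="https://example.com/a">A</a> <a href="https://example.com/b">B</a>',
    "https://example.com/a": '<a href="https://example.com/c">C</a>',
    "https://example.com/b": '<a href="https://example.com/">Home</a>',
    "https://example.com/c": "No links here",
}

# Naive href extraction; real crawlers use a proper HTML parser.
HREF_RE = re.compile(r'href="([^"]+)"')

def crawl(seeds, pages, budget=100):
    """Breadth-first crawl from seed URLs, storing a copy of each page."""
    frontier = deque(seeds)
    stored = {}  # URL -> downloaded content (a stand-in for the index)
    while frontier and len(stored) < budget:
        url = frontier.popleft()
        if url in stored or url not in pages:
            continue  # already stored, or a link to a page that doesn't exist
        html = pages[url]
        stored[url] = html
        for link in HREF_RE.findall(html):
            if link not in stored:
                frontier.append(link)
    return stored
```

Starting from the homepage, this crawler reaches all four pages; lowering `budget` to 2 stops it after the homepage and `/a`, which is the same trade-off a crawl budget imposes on a real site.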

During the indexing process, the algorithm analyzes and interprets what it has found so that it can serve up relevant information later on[4].
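Search engines commonly organize analyzed content as an inverted index, which maps each word to the pages that contain it so that lookups at query time are fast. The sketch below is a simplified textbook version in Python, assuming plain-text documents, lowercase word tokens, and a query that must match every term; the function names are illustrative, and Google’s real index stores far more about each page.

```python
import re
from collections import defaultdict

def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())

def build_index(docs):
    """Map each term to the set of document IDs that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in tokenize(text):
            index[term].add(doc_id)
    return index

def search(index, query):
    """Return IDs of documents containing every query term (AND semantics)."""
    terms = tokenize(query)
    if not terms:
        return set()
    results = set(index.get(terms[0], set()))
    for term in terms[1:]:
        results &= index.get(term, set())
    return results
```

For documents `{"p1": "Easy chocolate cake recipe", "p2": "Chocolate chip cookies", "p3": "Vanilla cake"}`, searching for "chocolate cake" intersects the pages for each word and returns only `p1`, without rereading any page text.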

When we say your web page is indexed, we mean it is eligible to show up in Google search results. You can estimate how much of your site has been indexed by typing “site:your-website-domain” into the Google search bar[5]. For example, when I search AllRecipes.com with “site:www.allrecipes.com”, I currently get 96,700 results, suggesting that about that many pages have been indexed.

Common Issues that Hurt Web Crawling and Indexing

The first step to ranking on Google is making sure your website is being crawled and indexed. A page that isn’t crawled and indexed will not end up on a Google results page, no matter how relevant or high-quality it is.

Here are some common issues:

Haven’t Submitted an XML Sitemap

An XML sitemap is essentially a list of the pages on your website, formatted in Extensible Markup Language (XML)[6]. You can submit a sitemap to Google to tell it directly which pages to crawl. You can generate a sitemap automatically with a tool such as XML Sitemaps, which builds the sitemap from your website’s URL[7].

If you don’t submit a sitemap, there is a good chance that only part of your website will be discovered and crawled. Using a sitemap can also help the bot index your new or updated content earlier, so you spend less time shouting into the void[8].
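If you’d rather script it than use a generator, a basic sitemap is simple enough to build yourself. The sketch below is a minimal Python example, assuming you already have the list of page URLs (the example.com addresses are placeholders and `build_sitemap` is a hypothetical helper). It writes the `<urlset>`, `<url>`, and `<loc>` elements defined by the sitemaps.org protocol and leaves out optional tags such as `<lastmod>`.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(urls):
    """Return a sitemaps.org-style <urlset> document as a string."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc in urls:
        url_el = ET.SubElement(urlset, "url")
        # ElementTree escapes special characters such as "&" automatically.
        ET.SubElement(url_el, "loc").text = loc
    xml_body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + xml_body
```

Save the output as something like sitemap.xml at the root of your site, then submit its URL in the Sitemaps report in Google Search Console.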

Server issues

A simplified way to look at the internet is as billions of computers in a network, serving (giving) and retrieving information from one another. When you visit a website, that website lives on a computer called a server as a set of folders and files (e.g., HTML, CSS, and JavaScript files, plus images)[9].

Your web browser requests pages from that server using HTTP requests[9]. If everything goes well, the server sends that information back to the browser along with a response status code signifying that everything worked. If there is an issue with the server, the response will carry a status code between 500 and 599[10]. These server errors can disrupt web crawling, and so can 404 (“not found”) errors[11].

To find these errors, you can use Google Search Console[11]. If you get a list of URLs with server issues, start by clicking on each one to see whether the page loads. If it does, Google likely just has outdated information[12].
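Outside Search Console, you can spot-check a URL’s status code yourself. This is a minimal Python sketch using only the standard library; `check_url`, `classify_status`, and their category labels are our own hypothetical names. It sends a `HEAD` request, which a few servers reject (they may answer 405), so treat odd results as a prompt to check in a browser. Network failures such as DNS errors are not caught here and surface as `urllib.error.URLError`.

```python
import urllib.error
import urllib.request

def classify_status(code):
    """Group an HTTP status code into broad categories."""
    if 200 <= code <= 299:
        return "ok"
    if 300 <= code <= 399:
        return "redirect"
    if code == 404:
        return "not found"
    if 400 <= code <= 499:
        return "client error"
    if 500 <= code <= 599:
        return "server error"
    return "unknown"

def check_url(url, timeout=10):
    """Request a URL and return (status_code, category).

    urlopen follows redirects automatically, so the code reported is that
    of the final response; 4xx/5xx responses arrive as HTTPError.
    """
    request = urllib.request.Request(url, method="HEAD")
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            code = response.status
    except urllib.error.HTTPError as err:
        code = err.code
    return code, classify_status(code)
```

Running `check_url` over each URL from a Search Console report gives a quick first pass: anything still labeled "server error" deserves a closer look.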

Issues with robots.txt

A file called robots.txt, placed at the root of your website, can be used to prevent crawlers from accessing certain pages[4]. Its main purpose is to make sure bots don’t send your site too many requests. That might sound counterintuitive, but too many bots crawling at once can overload your server[13]. You may also want to keep bots away from pages that aren’t meant to be public[14].

You can manually control whether a crawler can browse a page by changing this file[4].

To prevent crawlers from exploring all pages[7]:

```text
User-agent: *
Disallow: /
```

To prevent crawlers from exploring pages in a specific folder called “blog”[7]:

```text
User-agent: *
Disallow: /blog/
```

To allow all pages to be crawled[7]:

```text
User-agent: *
Allow: /
```
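You can test rules like these before publishing them. Python’s standard-library `urllib.robotparser` can parse robots.txt text directly, as in this sketch, which checks the “blog” example above (`make_parser` is a hypothetical helper and the URLs are placeholders). It only approximates Googlebot’s behavior: Python’s parser applies the first matching rule rather than Google’s most-specific-rule precedence, and it treats a blank line after a `User-agent` line as ending that group, which is one reason to keep each group’s lines together.

```python
from urllib.robotparser import RobotFileParser

# The "blog" example, one directive per line with no blank line between.
ROBOTS_TXT = """\
User-agent: *
Disallow: /blog/
"""

def make_parser(robots_txt):
    """Build a parser from robots.txt text instead of fetching it."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

parser = make_parser(ROBOTS_TXT)
print(parser.can_fetch("Googlebot", "https://www.example.com/blog/post-1"))  # False
print(parser.can_fetch("Googlebot", "https://www.example.com/about"))        # True
```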

Redirect Loops and Chains

Another crawler death trap is the redirect loop, in which pages redirect to each other in a cycle. This can trap the bot in redirect purgatory until it abandons its crawling attempt[7]. A related issue is the redirect chain, a series of URLs that each redirect to the next (like A ⇨ B ⇨ C ⇨ D)[15].

If crawlers spend time on redirect chains and loops, they might skip pages they would otherwise have reached. That’s because crawlers work within a crawl budget, which limits how many pages the bot will crawl on your site. You don’t want that budget used up by redirects[15].
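A redirect audit boils down to following each redirect until it stops. This Python sketch assumes a hypothetical `redirects` dictionary mapping each redirecting URL to its target (the kind of data a site-audit export might give you) and labels the result: one hop is an ordinary redirect, two or more form a chain, and revisiting a URL means a loop. `max_hops` is an arbitrary safety limit, not a documented Googlebot value.

```python
def trace_redirects(start, redirects, max_hops=10):
    """Follow a URL through a redirect map.

    Returns (path, status), where status is "ok", "chain", "loop",
    or "too many hops".
    """
    path = [start]
    seen = {start}
    current = start
    while current in redirects:
        if len(path) > max_hops:
            return path, "too many hops"
        current = redirects[current]
        path.append(current)
        if current in seen:
            return path, "loop"
        seen.add(current)
    # One hop is an ordinary redirect; two or more hops form a chain.
    return path, ("chain" if len(path) > 2 else "ok")
```

With `{"/a": "/b", "/b": "/c", "/c": "/d"}`, tracing `/a` reports a chain, and the fix is usually to point `/a` straight at `/d`.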

“nofollow” or “noindex” Meta Tag

If you don’t want web crawlers to follow the links on a webpage, add a “nofollow” robots meta tag (<meta name="robots" content="nofollow">). Be careful not to accidentally copy this tag onto all your pages[7].

Likewise, a “noindex” tag will prevent the page from being indexed (<meta name="robots" content="noindex">). If you keep a “noindex” tag in place for a long time, it will eventually be treated as a “nofollow”[7].
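To catch a stray “nofollow” or “noindex” before Google does, you can scan a page’s HTML for robots meta tags. Here is a minimal sketch using Python’s built-in `html.parser`; `RobotsMetaParser` and `robots_directives` are hypothetical names, and the check only looks at `<meta name="robots">`, so directives aimed at a specific crawler (for example `name="googlebot"`) are not covered.

```python
from html.parser import HTMLParser

class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        # Attribute names arrive lowercased; values may be None.
        attributes = {name: (value or "") for name, value in attrs}
        if attributes.get("name", "").strip().lower() == "robots":
            for directive in attributes.get("content", "").split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())

def robots_directives(html):
    """Return the set of robots directives declared in the HTML."""
    parser = RobotsMetaParser()
    parser.feed(html)
    parser.close()
    return parser.directives
```

Running this over every page template is a cheap way to confirm that “noindex” appears only where you meant to put it.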

Broken Links

Broken links lead crawlers to dead ends, so they also hurt the crawlability of your site[7]. Check your links regularly to make sure they still work.
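A basic broken-link check has two steps: collect the links on a page, then request each one. The Python sketch below does both with the standard library. It assumes you already have the page’s HTML, skips in-page anchors and `mailto:` links, and counts any 4xx/5xx response or unreachable host as broken; `extract_links` and `is_broken` are illustrative names, not an existing tool, and some servers reject `HEAD` requests.

```python
import urllib.error
import urllib.request
from html.parser import HTMLParser
from urllib.parse import urljoin

class LinkExtractor(HTMLParser):
    """Collect absolute URLs from <a href="..."> tags."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href and not href.startswith(("#", "mailto:")):
                # Resolve relative links against the page's own URL.
                self.links.append(urljoin(self.base_url, href))

def extract_links(html, base_url):
    parser = LinkExtractor(base_url)
    parser.feed(html)
    parser.close()
    return parser.links

def is_broken(url, timeout=10):
    """Treat 4xx/5xx responses and unreachable hosts as broken."""
    try:
        request = urllib.request.Request(url, method="HEAD")
        with urllib.request.urlopen(request, timeout=timeout):
            return False
    except urllib.error.HTTPError as err:
        return err.code >= 400
    except OSError:  # URLError, timeouts, and other connection failures
        return True
```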

Poor Site Architecture

Web crawlers can also be thwarted by a poorly designed site architecture. You should be able to reach any page of your site from the homepage in just a few clicks[7]. This is known as a “flat” structure.
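Click depth is easy to measure with a breadth-first search from the homepage, which finds the fewest clicks needed to reach each page. This Python sketch assumes a hypothetical `links` dictionary describing your internal-link graph (for example, built from a site crawl); `click_depths` is an illustrative name.

```python
from collections import deque

def click_depths(links, home):
    """Breadth-first search: fewest clicks from the homepage to each page.

    `links` maps each page to the pages it links to.
    """
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths
```

Pages with a large depth are candidates for extra internal links; where exactly “a few clicks” ends is a judgment call.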


Orphan Pages

Orphan pages are pages that no internal links point to. Think of crawlers as following links to get from page to page on your site. If none of your other pages link to a given page, that page becomes an orphan: there is no path for a crawler to follow to reach it. Make sure there is a path of links to every one of your pages[7].
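Given the list of pages you expect to be indexed (for example, the URLs in your sitemap) and a map of which pages link where, finding orphans is a set difference. In this Python sketch the data and the `find_orphans` helper are hypothetical. Note that `/blog/post-2` links out to the homepage but is still an orphan, because nothing links to it. The check looks only at inbound links, so a page linked solely from other orphans would still be unreachable and needs a reachability search from the homepage to catch.

```python
def find_orphans(links, all_pages, home="/"):
    """Return pages that no other page links to.

    `all_pages` is the full list of URLs you expect to be indexed;
    `links` maps each page to the pages it links to.
    """
    linked_to = set()
    for source, targets in links.items():
        for target in targets:
            if target != source:  # a self-link does not rescue an orphan
                linked_to.add(target)
    return sorted(p for p in all_pages if p not in linked_to and p != home)
```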

During Search: Retrieval and Ranking

How Does Google Understand What I Want?

Google uses AI and machine learning to determine the user’s intent when they search[16]. It doesn’t merely match keywords, although keywords are still important[16]. Google Search has gone through several algorithm updates over the years, such as:

- Synonym matching, which means Google can retrieve a page that covers “affordable handbags and purses” when someone queries the less classy-sounding “cheap purse”[1].

- The RankBrain machine learning algorithm helps find related pages based on the concepts they cover so that the words in the query don’t have to be an exact match to the words on the page it retrieved[1].

- The BERT update (Bidirectional Encoder Representations from Transformers) is a neural network system used for natural language processing[1]. Natural language processing allows Google to somewhat understand human language.
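As a loose illustration of why synonym matching helps, the Python toy below checks a page against a query with and without a synonym table. The `SYNONYMS` table is a hypothetical hand-written list; Google learns these relationships from data with systems like RankBrain and BERT rather than from a fixed dictionary, so this shows the idea, not Google’s method.

```python
# Hypothetical hand-written synonym table, for illustration only.
SYNONYMS = {
    "cheap": {"affordable", "inexpensive"},
    "purse": {"purses", "handbag", "handbags"},
}

def words(text):
    return set(text.lower().split())

def exact_match(page_text, query):
    """Every query word must appear verbatim on the page."""
    return words(query) <= words(page_text)

def synonym_match(page_text, query):
    """Every query word, or one of its listed synonyms, must appear."""
    page = words(page_text)
    return all(({w} | SYNONYMS.get(w, set())) & page for w in words(query))
```

For the page text “Affordable handbags and purses”, `exact_match` rejects the query “cheap purse” while `synonym_match` accepts it.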

How does Google Order Results?

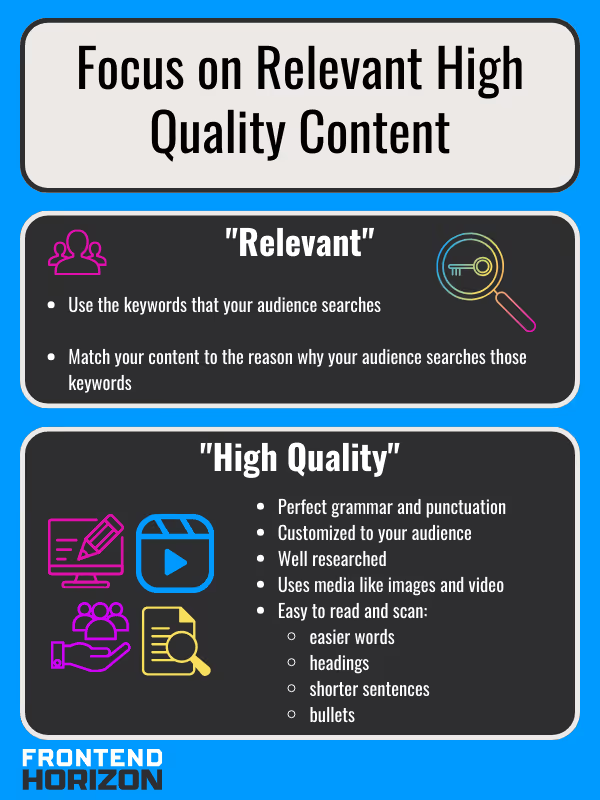

Google Search’s algorithm uses ranking factors to order search results. The most important thing to remember is to keep publishing good-quality content (26% of the weight). The second most important factor is using keywords in the meta title (17% of the weight)[2]. In another article, we cover an expansive list of over 200 Google ranking factors. In general, though, ranking comes down to how relevant the web page is to the query, the quality of the article and website, and the authority of the website[2].

“Relevancy” depends on keywords and phrases that represent the searcher’s intent. Using a keyword frequently (but not excessively) on a page improves the page’s relevance for that keyword, as does placing the keyword in the right places[16]. We’ll look more closely at what “the right place for a keyword” means in another article. Generally speaking, though, the most important places are the URL, page title, meta description, and header tags[1].
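As a quick self-check of the placements listed above, the Python sketch below reports which of those locations mention a keyword. The `Page` fields, the `keyword_placements` helper, and the hyphenated-slug assumption for URLs are our own simplifications; the output is a checklist, not a ranking score, and says nothing about how Google weighs each location.

```python
from dataclasses import dataclass

@dataclass
class Page:
    url: str
    title: str
    meta_description: str
    headers: list
    body: str

def keyword_placements(page, keyword):
    """Report which key on-page locations mention the keyword."""
    kw = keyword.lower()
    slug_kw = kw.replace(" ", "-")  # assumes hyphenated URL slugs
    return {
        "url": slug_kw in page.url.lower(),
        "title": kw in page.title.lower(),
        "meta_description": kw in page.meta_description.lower(),
        "headers": any(kw in h.lower() for h in page.headers),
        "body": kw in page.body.lower(),
    }
```

Any `False` entry is a spot to consider working the keyword in naturally, not a rule to force it everywhere.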

“Quality” depends on a lot of things, but generally it means you are writing for people and not just search engine bots. This means providing useful information and making sure your content matches your titles and headers. It also means citing high-quality, reputable sources and including plenty of images and videos (6-8 is ideal). Shoot for longer articles when there is enough to talk about[17]. I say “when there is enough to talk about” because you don’t want to sound like a college freshman padding a term paper with fluff to hit the word count.

The other side of quality is whether the site works well technically. For example, pages should load quickly[18], and they should work on mobile devices.

There are lots of ways to end up with low-quality content, and one of the surest ways to lose at Google is trying to cheat the system. Obviously, stealing someone else’s content and putting it on your site (called “scraped content”) is low-quality. It also happens to be illegal[17] [19]. You can avoid this by always paraphrasing your sources and by using a citation manager (like Zotero) and a plagiarism checker.

Less insidious, but still low-quality, is “thin content”. Thin content is like having a long conversation with someone who isn’t actually saying anything. In real life, it might mean they’re using cocaine or running for political office. Online, people might create thin content to attract website traffic without offering visitors anything useful[17][20]. It is similar to keyword stuffing, which is when you cram as many keywords as you can into content, bastardizing written language until it is unreadable[17].
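Keyword stuffing is often discussed in terms of keyword density: the share of a page’s words taken up by the keyword. This Python sketch computes it for single- or multi-word keywords using simple word tokenization; `keyword_density` is a hypothetical helper. Google publishes no “safe” density, so treat the number as a readability sanity check rather than a target.

```python
import re

def keyword_density(text, keyword):
    """Share of words in `text` that belong to occurrences of `keyword`.

    Multi-word keywords count every word of each occurrence.
    """
    words = re.findall(r"[a-z0-9']+", text.lower())
    kw_words = re.findall(r"[a-z0-9']+", keyword.lower())
    if not words or not kw_words:
        return 0.0
    n = len(kw_words)
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == kw_words)
    return hits * n / len(words)
```

For the stuffed sentence “cheap purse cheap purse buy cheap purse now”, the density of “cheap purse” is 0.75, a clear red flag.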

The last major ranking category is authority. Authority can be measured by how long a website has existed and whether other pages link to it[16]. According to Google’s algorithm, the more authoritative websites link to you, the more authoritative you are[1]. I include it last because it is something one has far less control over and because it usually depends on how good your content is. People need a reason to link to your content in the first place.
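The idea that links from authoritative pages confer authority goes back to PageRank, the link-analysis algorithm Google was founded on. Below is the classic textbook formulation computed by power iteration, sketched in Python for a hypothetical link graph; the damping factor of 0.85 is the commonly cited default. Today’s Google combines links with many other signals, so read this as an illustration of the principle, not how Google currently scores sites.

```python
def pagerank(links, damping=0.85, iterations=50):
    """Classic PageRank by power iteration.

    `links` maps each page to the pages it links to. Pages with no
    outgoing links spread their score evenly across all pages.
    """
    pages = set(links) | {t for targets in links.values() for t in targets}
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(iterations):
        # Every page gets a small baseline share regardless of links.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page in pages:
            targets = links.get(page, [])
            if targets:
                share = damping * rank[page] / len(targets)
                for target in targets:
                    new_rank[target] += share
            else:
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        rank = new_rank
    return rank
```

For the graph `{"a": ["b", "c"], "b": ["c"], "c": ["a"], "d": ["c"]}`, page `c` collects links from three pages and ends up with the highest score, while `d`, which nothing links to, gets only the baseline share.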

Bullet Summary

- Google discovers new pages via web crawling. Page information is stored in an index for later retrieval.

- Make sure your site is crawlable and indexable.

- Focus on making content that is good for users.

References

[1] “How Does Google Search Work? The Google Algorithm Explained,” WEBO Digital. Accessed: Sep. 27, 2023. [Online]. Available: https://webo.digital/blog/how-does-google-search-work/

[2] “Search Engine Optimization - Learn to Optimize for SEO,” WordStream. Accessed: Jul. 29, 2023. [Online]. Available: https://www.wordstream.com/seo

[3] “What is a web crawler? | How web spiders work,” Cloudflare. Accessed: Oct. 04, 2023. [Online]. Available: https://www.cloudflare.com/learning/bots/what-is-a-web-crawler/

[4] “In-Depth Guide to How Google Search Works | Google Search Central | Documentation,” Google for Developers. Accessed: Sep. 27, 2023. [Online]. Available: https://developers.google.com/search/docs/fundamentals/how-search-works

[5] “What Is Technical SEO? Basics and 10 Best Practices,” Semrush Blog. Accessed: Jul. 28, 2023. [Online]. Available: https://www.semrush.com/blog/technical-seo/

[6] M. Hendriks, “What is an XML sitemap and why should you have one?,” Yoast. Accessed: Oct. 11, 2023. [Online]. Available: https://yoast.com/what-is-an-xml-sitemap-and-why-should-you-have-one/

[7] “11 Crawlability Problems & How to Fix Them,” Semrush Blog. Accessed: Sep. 28, 2023. [Online]. Available: https://www.semrush.com/blog/crawlability-issues/

[8] “How to Submit a Sitemap to Google [& Why You Should].” Accessed: Oct. 21, 2023. [Online]. Available: https://www.tributemedia.com/blog/submit-your-sitemap-google

[9] “What is a web server? - Learn web development | MDN.” Accessed: Oct. 11, 2023. [Online]. Available: https://developer.mozilla.org/en-US/docs/Learn/Common_questions/Web_mechanics/What_is_a_web_server

[10] “HTTP response status codes - HTTP | MDN.” Accessed: Oct. 11, 2023. [Online]. Available: https://developer.mozilla.org/en-US/docs/Web/HTTP/Status

[11] “8 Crawlability Issues That Are Hurting Your SEO.” Accessed: Oct. 11, 2023. [Online]. Available: https://www.seoclarity.net/blog/crawlability-problems

[12] J. Marchwinski, “How to Solve Server Errors with Google Search Console,” Knowledgebase. Accessed: Oct. 21, 2023. [Online]. Available: https://theeventscalendar.com/knowledgebase/how-to-solve-server-errors-with-google-search-console/

[13] “Robots.txt Introduction and Guide | Google Search Central | Documentation,” Google for Developers. Accessed: Sep. 28, 2023. [Online]. Available: https://developers.google.com/search/docs/crawling-indexing/robots/intro

[14] N. Patel, “How to Create the Perfect Robots.txt File for SEO,” Neil Patel. Accessed: Oct. 11, 2023. [Online]. Available: https://neilpatel.com/blog/robots-txt/

[15] “4 Common Crawl Errors and Why You Need to Fix Them.” Accessed: Sep. 28, 2023. [Online]. Available: https://www.newbreedrevenue.com/blog/common-crawl-errors-and-why-you-need-to-fix-them

[16] “How Google Works,” HowStuffWorks. Accessed: Sep. 27, 2023. [Online]. Available: https://computer.howstuffworks.com/internet/basics/google.htm

[17] A. Hallan, “What is quality content and how does Google recognize it? - Credible Content Blog,” Credible Content Writing & SEO Copywriting Blog. Accessed: Oct. 11, 2023. [Online]. Available: https://credible-content.com/blog/quality-content-google-recognize/

[18] “The Three Pillars Of SEO: Authority, Relevance, And Experience,” Search Engine Journal. Accessed: Oct. 21, 2023. [Online]. Available: https://www.searchenginejournal.com/seo/search-authority/

[19] “Scraped Content Explained,” Page One Power. Accessed: Oct. 11, 2023. [Online]. Available: https://www.pageonepower.com/search-glossary/scraped-content

[20] “What is Thin Content?,” Ahrefs. Accessed: Oct. 11, 2023. [Online]. Available: https://ahrefs.com/seo/glossary/thin-content
